Sequent calculus

This article presents the language and sequent calculus of second-order linear logic and the basic properties of this sequent calculus. The core of the article uses the two-sided system with negation as a proper connective; the one-sided system, often used as the definition of linear logic, is presented at the end of the page.


Formulas

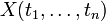

Atomic formulas, written α,β,γ, are predicates of the form p(t₁,…,tₙ), where the tᵢ are terms from some first-order language. The predicate symbol p may be either a predicate constant or a second-order variable. By convention we will write first-order variables as x,y,z, second-order variables as X,Y,Z, and ξ for a variable of arbitrary order (see Notations).

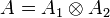

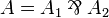

Formulas, represented by capital letters A, B, C, are built using the following connectives:

| α | atom |

|

negation | |

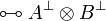

|

tensor |

|

par | multiplicatives |

|

one |

|

bottom | multiplicative units |

|

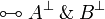

plus |

|

with | additives |

|

zero |

|

top | additive units |

|

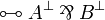

of course |

|

why not | exponentials |

|

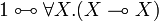

there exists |

|

for all | quantifiers |

Each line (except the first one, for atoms and negation) corresponds to a particular class of connectives, and each class consists of a pair of connectives. Those in the left column are called positive and those in the right column are called negative.

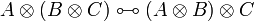

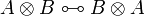

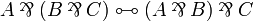

The tensor and with connectives are conjunctions while par and plus are disjunctions. The exponential connectives are called modalities, and traditionally read of course A for !A and why not A for ?A. Quantifiers may apply to first- or second-order variables.

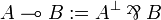

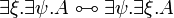

There is no connective for implication in the syntax of standard linear logic.

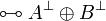

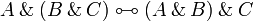

Instead, a linear implication is defined similarly to the decomposition A → B = ¬A ∨ B in classical logic, as A ⊸ B := A^⊥ ⅋ B.

Free and bound variables and first-order substitution are defined in the

standard way.

Formulas are always considered up to renaming of bound names.

If A is a formula, X is a second-order variable and B[x₁,…,xₙ] is a formula with variables xᵢ, then the formula A[B / X] is A where every atom X(t₁,…,tₙ) is replaced by B[t₁,…,tₙ].
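As a small worked instance of second-order substitution, take a unary second-order variable X. The predicate symbols p and q and the terms t and u are illustrative names chosen here, not taken from the text; `\parr` is assumed to come from the cmll package.

```latex
% Illustrative example only: p, q, t, u are assumed names.
% Requires amsmath; \parr is assumed from the cmll package.
\[
A = X(t) \otimes X(u), \qquad B[x] = p(x) \parr q
\]
\[
A[B/X] = \bigl(p(t) \parr q\bigr) \otimes \bigl(p(u) \parr q\bigr)
\]
```

Each occurrence of X is replaced by a copy of B instantiated with that occurrence's own argument.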

Sequents and proofs

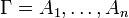

A sequent is an expression  where

Γ and Δ are finite multisets of formulas.

For a multiset

where

Γ and Δ are finite multisets of formulas.

For a multiset  , the notation

, the notation

represents the multiset

represents the multiset

.

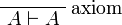

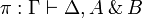

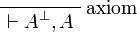

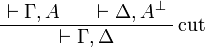

Proofs are labelled trees of sequents, built using the following inference

rules:

.

Proofs are labelled trees of sequents, built using the following inference

rules:

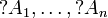

- Identity group:

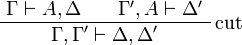

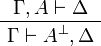

- Negation:

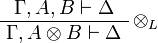

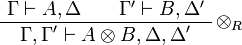

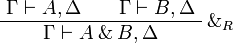

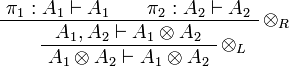

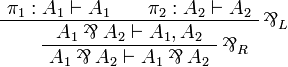

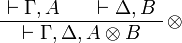

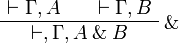

- Multiplicative group:

- tensor:

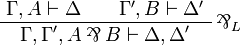

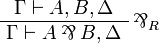

- par:

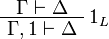

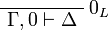

- one:

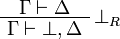

- bottom:


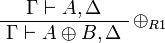

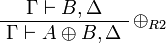

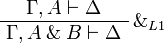

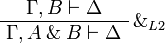

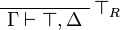

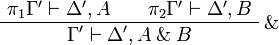

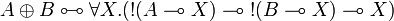

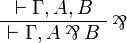

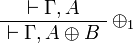

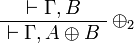

- Additive group:

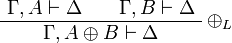

- plus:

- with:

- zero:

- top:


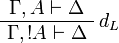

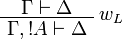

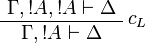

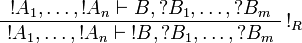

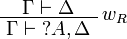

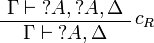

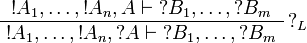

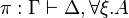

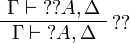

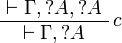

- Exponential group:

- of course:

- why not:


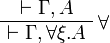

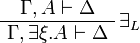

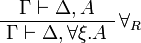

- Quantifier group (in the ∃_L and ∀_R rules, ξ must not occur free in Γ or Δ):

- there exists:

![\AxRule{ \Gamma \vdash \Delta, A[t/x] } \LabelRule{ \exists^1_R } \UnaRule{ \Gamma \vdash \Delta, \exists x.A } \DisplayProof](/mediawiki/images/math/3/3/c/33cd98d69ac858f40d7a231d96037aea.png)

![\AxRule{ \Gamma \vdash \Delta, A[B/X] } \LabelRule{ \exists^2_R } \UnaRule{ \Gamma \vdash \Delta, \exists X.A } \DisplayProof](/mediawiki/images/math/9/6/b/96b94853d6eac425879ceef1583f5e84.png)

- for all:

![\AxRule{ \Gamma, A[t/x] \vdash \Delta } \LabelRule{ \forall^1_L } \UnaRule{ \Gamma, \forall x.A \vdash \Delta } \DisplayProof](/mediawiki/images/math/1/a/3/1a33210d9d315018d6489ce6bab92ea7.png)

![\AxRule{ \Gamma, A[B/X] \vdash \Delta } \LabelRule{ \forall^2_L } \UnaRule{ \Gamma, \forall X.A \vdash \Delta } \DisplayProof](/mediawiki/images/math/7/6/8/768bcb3e8ddcd5c483cd40839b4a035c.png)

The left rules for of course and right rules for why not are called

dereliction, weakening and contraction rules.

The right rule for of course and the left rule for why not are called

promotion rules.

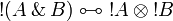

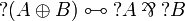

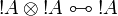

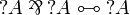

Note the fundamental fact that there are no contraction and weakening rules

for arbitrary formulas, but only for the formulas starting with the

modality.

This is what distinguishes linear logic from classical logic: if weakening and

contraction were allowed for arbitrary formulas, then

modality.

This is what distinguishes linear logic from classical logic: if weakening and

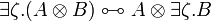

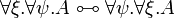

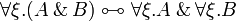

contraction were allowed for arbitrary formulas, then  and

and  would be identified, as well as

would be identified, as well as  and

and  ,

,  and

and  ,

,

and

and  .

By identified, we mean here that replacing a

.

By identified, we mean here that replacing a  with a

with a  or

vice versa would preserve provability.

or

vice versa would preserve provability.
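The collapse of tensor and with under unrestricted structural rules can be checked step by step. The following is a sketch under stated assumptions: w_L and c_L denote hypothetical weakening and contraction rules on arbitrary left-hand formulas (not rules of linear logic), and the names &_{L1}, &_{L2} for the two left rules of with are chosen here for illustration.

```latex
% Sketch only: w_L and c_L are hypothetical rules, NOT rules of linear logic.
% Requires amsmath for \text.
% With weakening, tensor entails with:
\[
\begin{array}{lll}
(1) & A, B \vdash A & \text{axiom } A \vdash A \text{ followed by } w_L \\
(2) & A, B \vdash B & \text{axiom } B \vdash B \text{ followed by } w_L \\
(3) & A, B \vdash A \mathbin{\&} B & \&_R \text{ on (1) and (2)} \\
(4) & A \otimes B \vdash A \mathbin{\&} B & \otimes_L \text{ on (3)}
\end{array}
\]
% With contraction, with entails tensor:
\[
\begin{array}{lll}
(1) & A \mathbin{\&} B \vdash A & \text{axiom } A \vdash A \text{ followed by } \&_{L1} \\
(2) & A \mathbin{\&} B \vdash B & \text{axiom } B \vdash B \text{ followed by } \&_{L2} \\
(3) & A \mathbin{\&} B,\ A \mathbin{\&} B \vdash A \otimes B & \otimes_R \text{ on (1) and (2)} \\
(4) & A \mathbin{\&} B \vdash A \otimes B & c_L \text{ on (3)}
\end{array}
\]
```

Neither derivation goes through in linear logic itself, because step (1) of the first and step (4) of the second use a structural rule on a formula that carries no exponential.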

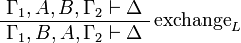

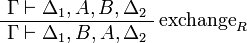

Sequents are considered as multisets, in other words as sequences up to permutation. An alternative presentation would be to define a sequent as a finite sequence of formulas and to add the exchange rules:

Equivalences

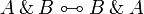

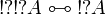

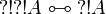

Two formulas A and B are (linearly) equivalent, written A ⧟ B, if both implications A ⊸ B and B ⊸ A are provable. Equivalently, A ⧟ B if both A ⊢ B and B ⊢ A are provable. Another formulation of A ⧟ B is that, for all Γ and Δ, Γ ⊢ Δ, A is provable if and only if Γ ⊢ Δ, B is provable.
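To see why provability of the implication A ⊸ B and provability of the sequent A ⊢ B coincide, one can unfold the definition of linear implication as A^⊥ ⅋ B. The derivations below are a sketch; the rule names n_L and n_R for the negation rules are assumed labels, and `\parr` is assumed to come from the cmll package.

```latex
% Sketch: rule names n_L, n_R are assumed labels for the negation rules.
% Requires amsmath; \parr is assumed from the cmll package.
% From A |- B to |- A -o B:
\[
\begin{array}{lll}
(1) & A \vdash B & \text{hypothesis} \\
(2) & \vdash A^\perp, B & n_R \text{ on (1)} \\
(3) & \vdash A^\perp \parr B & \parr_R \text{ on (2)}
\end{array}
\]
% From |- A -o B to A |- B (using a cut):
\[
\begin{array}{lll}
(1) & A, A^\perp \vdash & \text{axiom } A \vdash A \text{ followed by } n_L \\
(2) & A, A^\perp \parr B \vdash B & \parr_L \text{ on (1) and the axiom } B \vdash B \\
(3) & \vdash A^\perp \parr B & \text{hypothesis} \\
(4) & A \vdash B & \text{cut on (3) and (2)}
\end{array}
\]
```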

Two related notions are isomorphism (stronger than equivalence) and equiprovability (weaker than equivalence).

De Morgan laws

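Negation is involutive: A ⧟ (A^⊥)^⊥

Duality between connectives:

- (A ⊗ B)^⊥ ⧟ A^⊥ ⅋ B^⊥ and (A ⅋ B)^⊥ ⧟ A^⊥ ⊗ B^⊥
- 1^⊥ ⧟ ⊥ and ⊥^⊥ ⧟ 1
- (A ⊕ B)^⊥ ⧟ A^⊥ & B^⊥ and (A & B)^⊥ ⧟ A^⊥ ⊕ B^⊥
- 0^⊥ ⧟ ⊤ and ⊤^⊥ ⧟ 0
- (!A)^⊥ ⧟ ?(A^⊥) and (?A)^⊥ ⧟ !(A^⊥)
- (∃ξ.A)^⊥ ⧟ ∀ξ.(A^⊥) and (∀ξ.A)^⊥ ⧟ ∃ξ.(A^⊥)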
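The De Morgan dualities can be read as a recursive procedure that pushes negation down to the atoms. The following is a minimal sketch, assuming a small hypothetical formula representation (the class names, the ASCII connective names and the helper `lollipop` are all choices made here for illustration, not part of the article).

```python
from dataclasses import dataclass

# Hypothetical formula representation; connectives are named in ASCII.

@dataclass(frozen=True)
class Atom:
    name: str
    negated: bool = False  # True stands for the negated atom

@dataclass(frozen=True)
class Unit:
    symbol: str  # "one", "bottom", "zero" or "top"

@dataclass(frozen=True)
class Binary:
    op: str  # "tensor", "par", "plus" or "with"
    left: object
    right: object

@dataclass(frozen=True)
class Unary:
    op: str  # "ofcourse" or "whynot"
    body: object

@dataclass(frozen=True)
class Quantified:
    op: str  # "exists" or "forall"
    var: str
    body: object

# Each connective is paired with its De Morgan dual.
DUAL = {
    "tensor": "par", "par": "tensor",
    "one": "bottom", "bottom": "one",
    "plus": "with", "with": "plus",
    "zero": "top", "top": "zero",
    "ofcourse": "whynot", "whynot": "ofcourse",
    "exists": "forall", "forall": "exists",
}

def dual(f):
    """Return the De Morgan dual of f, with negation only on atoms."""
    if isinstance(f, Atom):
        return Atom(f.name, not f.negated)
    if isinstance(f, Unit):
        return Unit(DUAL[f.symbol])
    if isinstance(f, Binary):
        return Binary(DUAL[f.op], dual(f.left), dual(f.right))
    if isinstance(f, Unary):
        return Unary(DUAL[f.op], dual(f.body))
    if isinstance(f, Quantified):
        return Quantified(DUAL[f.op], f.var, dual(f.body))
    raise TypeError(f"not a formula: {f!r}")

def lollipop(a, b):
    """Linear implication, unfolded as (dual of a) par b."""
    return Binary("par", dual(a), b)
```

In this representation involutivity of negation becomes a syntactic identity: applying `dual` twice returns the original formula.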

Fundamental equivalences

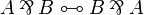

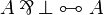

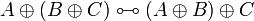

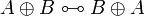

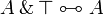

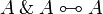

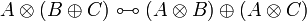

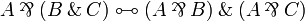

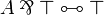

- Associativity, commutativity, neutrality:

-

- Idempotence of additives:

-

- Distributivity of multiplicatives over additives:

-

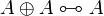

- Defining property of exponentials:

-

- Monoidal structure of exponentials:

-

- Digging:

-

- Other properties of exponentials:

-

These properties of exponentials lead to the lattice of exponential modalities.

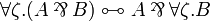

- Commutation of quantifiers (ζ does not occur in A):

-

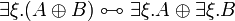

Definability

The units and the additive connectives can be defined using second-order quantification and exponentials. Indeed, the following equivalences hold (where X does not occur free in A or B):

- 0 ⧟ ∀X.X
- 1 ⧟ ∀X.(X ⊸ X)
- A ⊕ B ⧟ ∀X.(!(A ⊸ X) ⊸ !(B ⊸ X) ⊸ X)

The constants ⊤ and ⊥ and the connective & can be defined by duality.

Any pair of connectives that has the same rules as ⊗/⅋ is equivalent to it; the same holds for additives, but not for exponentials.

Other basic equivalences exist.

Properties of proofs

Cut elimination and consequences

Theorem (cut elimination)

For every sequent Γ ⊢ Δ, there is a proof of Γ ⊢ Δ if and only if there is a proof of Γ ⊢ Δ that does not use the cut rule.

This property is proved using a set of rewriting rules on proofs, together with appropriate termination arguments (see the specific articles on cut elimination for detailed proofs). It is the core of the proof/program correspondence.

It has several important consequences:

Definition (subformula)

The subformulas of a formula A are A and, inductively, the subformulas of its immediate subformulas:

- the immediate subformulas of A ⊗ B, A ⅋ B, A ⊕ B, A & B are A and B,

- the only immediate subformula of !A and ?A is A,

- 1, ⊥, 0, ⊤ and atomic formulas have no immediate subformula,

- the immediate subformulas of ∃x.A and ∀x.A are all the A[t / x] for all first-order terms t,

- the immediate subformulas of ∃X.A and ∀X.A are all the A[B / X] for all formulas B (with the appropriate number of parameters).

Theorem (subformula property)

A sequent Γ ⊢ Δ is provable if and only if it is the conclusion of a proof in which each intermediate conclusion is made of subformulas of the formulas of Γ and Δ.

Proof. By the cut elimination theorem, if a sequent is provable, then it is provable by a cut-free proof. In each rule except the cut rule, all formulas of the premisses are either formulas of the conclusion, or immediate subformulas of it, therefore cut-free proofs have the subformula property.

The subformula property means essentially nothing in the second-order system, since any formula is a subformula of a quantified formula where the quantified variable occurs. However, the property is very meaningful if the sequent Γ ⊢ Δ does not use second-order quantification, as it puts a strong restriction on the set of potential proofs of a given sequent. In particular, it implies that the quantifier-free fragment without exponentials is decidable, since cut-free proof search then only meets finitely many sequents. With exponentials, contraction breaks this argument, and the quantifier-free fragment is in fact undecidable.

Theorem (consistency)

The empty sequent ⊢ is not provable. Consequently, it is impossible to prove both a formula A and its negation A^⊥; it is impossible to prove 0 or ⊥.

Proof. If a sequent is provable, then it is the conclusion of a cut-free proof. In each rule except the cut rule, there is at least one formula in the conclusion. Therefore ⊢ cannot be the conclusion of a proof. The other properties are immediate consequences: if ⊢ A^⊥ and ⊢ A are provable, then by the left negation rule A^⊥ ⊢ is provable, and by the cut rule one gets the empty conclusion, which is not possible. As particular cases, since 1 and ⊤ are provable, ⊥ and 0 are not, since they are equivalent to 1^⊥ and ⊤^⊥ respectively.

Expansion of identities

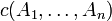

Let us write π : Γ ⊢ Δ to signify that π is a proof with conclusion Γ ⊢ Δ.

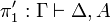

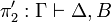

Proposition (η-expansion)

For every proof π, there is a proof π' with the

same conclusion as π in which the axiom rule is only used with

atomic formulas.

If π is cut-free, then there is a cut-free π'.

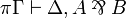

Proof. It suffices to prove that for every formula A, the sequent A ⊢ A has a cut-free proof in which the axiom rule is used only for atomic formulas. We prove this by induction on A.

- If A is atomic, then A ⊢ A is an instance of the atomic axiom rule.

- If A = A₁ ⊗ A₂ then applying the ⊗_R rule to π1 : A₁ ⊢ A₁ and π2 : A₂ ⊢ A₂ gives A₁, A₂ ⊢ A₁ ⊗ A₂, and the ⊗_L rule then gives A₁ ⊗ A₂ ⊢ A₁ ⊗ A₂, where π1 and π2 exist by induction hypothesis.

- If A = A₁ ⅋ A₂ then applying the ⅋_L rule to π1 : A₁ ⊢ A₁ and π2 : A₂ ⊢ A₂ gives A₁ ⅋ A₂ ⊢ A₁, A₂, and the ⅋_R rule then gives A₁ ⅋ A₂ ⊢ A₁ ⅋ A₂, where π1 and π2 exist by induction hypothesis.

- All other connectives follow the same pattern.
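As one more instance of the pattern followed by the other connectives, here is a sketch of the case of with. The rule names &_{L1} and &_{L2} for the two left rules are labels assumed here for illustration.

```latex
% Sketch of the eta-expansion step for A = A_1 & A_2.
% Rule names \&_{L1}, \&_{L2} are assumed labels. Requires amsmath.
\[
\begin{array}{lll}
(1) & A_1 \vdash A_1 & \pi_1 \text{, by induction hypothesis} \\
(2) & A_1 \mathbin{\&} A_2 \vdash A_1 & \&_{L1} \text{ on (1)} \\
(3) & A_2 \vdash A_2 & \pi_2 \text{, by induction hypothesis} \\
(4) & A_1 \mathbin{\&} A_2 \vdash A_2 & \&_{L2} \text{ on (3)} \\
(5) & A_1 \mathbin{\&} A_2 \vdash A_1 \mathbin{\&} A_2 & \&_R \text{ on (2) and (4)}
\end{array}
\]
```

No cut is used, and the axiom rule only appears inside π₁ and π₂, where it is atomic by induction hypothesis.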

The interesting thing with η-expansion is that we can always assume that each connective is explicitly introduced by its associated rule (except in the case where there is an occurrence of the ⊤ rule).

Reversibility

Definition (reversibility)

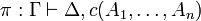

A connective c is called reversible if

- for every proof π : Γ ⊢ Δ, c(A₁,…,Aₙ), there is a proof π' with the same conclusion in which c(A₁,…,Aₙ) is introduced by the last rule,

- if π is cut-free then there is a cut-free π'.

Proposition

The connectives ⅋, ⊥, &, ⊤ and ∀ are reversible.

Proof. Using the η-expansion property, we assume that the axiom rule is only applied to atomic formulas. Then each top-level connective is introduced either by its associated (left or right) rule or in an instance of the 0_L or ⊤_R rule.

For ⅋, consider a proof π : Γ ⊢ Δ, A ⅋ B. If A ⅋ B is introduced by a ⅋_R rule (not necessarily the last rule in π), then if we remove this rule we get a proof of Γ ⊢ Δ, A, B (this can be proved by a straightforward induction on π). If it is introduced in the context of a 0_L or ⊤_R rule, then this rule can be changed so that A ⅋ B is replaced by A,B. In either case, we can apply a final ⅋_R rule to get the expected proof.

For ⊥, the same technique applies: if it is introduced by a ⊥_R rule, then remove this rule to get a proof of Γ ⊢ Δ; if it is introduced by a 0_L or ⊤_R rule, remove the ⊥ from this rule, then apply the ⊥_R rule at the end of the new proof.

For &, consider a proof π : Γ ⊢ Δ, A & B. If the connective is introduced by a &_R rule then this rule is applied in a context like the following: from π1 : Γ' ⊢ Δ', A and π2 : Γ' ⊢ Δ', B, the &_R rule concludes Γ' ⊢ Δ', A & B. Since the formula A & B is not involved in other rules (except as context), if we replace this step by π1 in π we finally get a proof π'1 : Γ ⊢ Δ, A. If we replace this step by π2 we get a proof π'2 : Γ ⊢ Δ, B. Combining π'1 and π'2 with a final &_R rule we finally get the expected proof. The case when the & was introduced in a 0_L or ⊤_R rule is solved as before.

For ⊤ the result is trivial: just choose π' as an instance of the ⊤_R rule with the appropriate conclusion.

For ∀, consider a proof π : Γ ⊢ Δ, ∀ξ.A. Up to renaming, we can assume that ξ occurs free only above the rule that introduces the quantifier. If the quantifier is introduced by a ∀_R rule, then if we remove this rule, we can check that we get a proof of Γ ⊢ Δ, A on which we can finally apply the ∀_R rule. The case when the ∀ξ.A was introduced in a 0_L or ⊤_R rule is solved as before.

Note that, in each case, if the proof we start from is cut-free, our transformations do not introduce a cut rule. However, if the original proof has cuts, then the final proof may have more cuts, since in the case of & we duplicated a part of the original proof.

A corresponding property for positive connectives is focalization, which states that clusters of positive formulas can be treated in one step, under certain circumstances.

One-sided sequent calculus

The sequent calculus presented above is very symmetric: for every left

introduction rule, there is a right introduction rule for the dual connective

that has the exact same structure.

Moreover, because of the involutivity of negation, a sequent Γ, A ⊢ Δ is provable if and only if the sequent Γ ⊢ A^⊥, Δ is provable. From these remarks, we can define an equivalent one-sided sequent calculus:

- Formulas are considered up to De Morgan duality. Equivalently, one can consider that negation is not a connective but a syntactically defined operation on formulas. In this case, negated atoms α^⊥ must be considered as another kind of atomic formulas.

- Sequents have the form ⊢ Γ.

The inference rules are essentially the same except that the left hand side of sequents is kept empty:

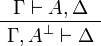

- Identity group:

-

- Multiplicative group:

-

- Additive group:

-

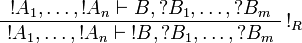

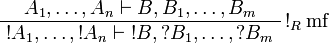

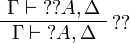

- Exponential group:

-

- Quantifier group (in the ∀ rule, ξ must not occur free in Γ):

-

Theorem

A two-sided sequent Γ ⊢ Δ is provable if and only if the sequent ⊢ Γ^⊥, Δ is provable in the one-sided system.
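As an illustration of this translation, here are two two-sided sequents with their one-sided counterparts, where the negations on the left-hand side have been pushed inwards by De Morgan duality. The formulas are arbitrary examples chosen here; `\parr` is assumed to come from the cmll package.

```latex
% Illustrative examples of the translation of a two-sided sequent into a
% one-sided one. Requires amsmath; \parr is assumed from the cmll package.
\[
\begin{array}{lcl}
A \otimes B \vdash C & \longmapsto & \vdash A^\perp \parr B^\perp,\ C \\
{!A},\ B \vdash {?C} & \longmapsto & \vdash {?A^\perp},\ B^\perp,\ {?C}
\end{array}
\]
```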

The one-sided system enjoys the same properties as the two-sided one, including cut elimination, the subformula property, etc. This formulation is often used when studying proofs because it is much lighter than the two-sided form while keeping the same expressiveness. In particular, proof-nets can be seen as a quotient of one-sided sequent calculus proofs under commutation of rules.

Variations

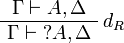

Exponential rules

- The promotion rule, on the right-hand side for example,

can be replaced by a multi-functorial promotion rule

can be replaced by a multi-functorial promotion rule

and a digging rule

and a digging rule

,

without modifying the provability.

,

without modifying the provability.

Note that digging violates the subformula property.

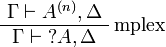

- In presence of the digging rule

, the multiplexing rule

, the multiplexing rule  (where A(n) stands for n occurrences of formula A) is equivalent (for provability) to the triple of rules: contraction, weakening, dereliction.

(where A(n) stands for n occurrences of formula A) is equivalent (for provability) to the triple of rules: contraction, weakening, dereliction.

Non-symmetric sequents

The same remarks that lead to the definition of the one-sided calculus can lead to the definition of other simplified systems:

- A one-sided variant with sequents of the form Γ ⊢ could be defined.

- When considering formulas up to De Morgan duality, an equivalent system is obtained by considering only the left and right rules for positive connectives (or the ones for negative connectives only, obviously).

- Intuitionistic linear logic is the two-sided system where the right-hand side is constrained to always contain exactly one formula (with a few associated restrictions).

- Similar restrictions are used in various semantics and proof search formalisms.

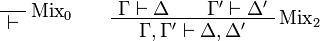

Mix rules

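It is quite common to consider mix rules: the nullary rule Mix₀, which proves the empty sequent ⊢ without premises, and the binary rule Mix₂, which derives Γ, Γ' ⊢ Δ, Δ' from Γ ⊢ Δ and Γ' ⊢ Δ'.

For reference, the existential rules of the quantifier group of the one-sided calculus are:

![\AxRule{ \vdash \Gamma, A[t/x] } \LabelRule{ \exists^1 } \UnaRule{ \vdash \Gamma, \exists x.A } \DisplayProof](/mediawiki/images/math/2/b/6/2b689e6be9aae26140ef3056c37f576b.png)

![\AxRule{ \vdash \Gamma, A[B/X] } \LabelRule{ \exists^2 } \UnaRule{ \vdash \Gamma, \exists X.A } \DisplayProof](/mediawiki/images/math/9/f/1/9f1d81fcdbb7913777097d7577cf0f1e.png)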